Transcription Is Hugely Important, and Here’s Where It’s Headed in the Near Future

It may not be on your radar, but transcription is a hugely important service to thousands of businesses. Here are some of the major changes happening in the transcription industry.

If you don’t spend a lot of your free time thinking about transcription, don’t worry. It’s a safe bet you’re not alone.

Transcription, however, is the process by which someone (or something) converts audio to text, and it is a vital tool in making a lot of modern society easier to understand.

To some, that might seem a bit counterintuitive. After all, ours is a culture that is heavily reliant on audio and video media, so why do we need text to clarify them?

Well, we suggest watching a handful of YouTube videos and then asking yourself just how helpful it would be to have a written document of everything voiced in the clip.

Why Does Transcription Matter?

Transcription, however, serves a much larger and more important purpose than that of deciphering your favorite viral videos.

Along with creating a written record of a video or audio presentation, transcription assists in cleaning (and clearing) up what’s spoken.

Language We Can All Understand

Consider all of the different accents that populate the United States. From north to south and along the coasts from east to west, there exists a vast variance in dialects in our country.

Now, examine the rest of the world.

No doubt there are times, when watching a British show on Netflix, that flipping on the closed captions greatly improves your enjoyment of the show.

In addition to cutting through thick accents or unfamiliar inflections, transcription also gets to the point of what’s said.

Take for example an online presentation or training class where the content is purposeful, but the individual presenting is not as polished.

Transcription removes the personal tics or audible distractions that might muddle the verbal elements of a presentation. It eliminates the stumbles or long pauses that occur when the presenter gets a drink of water or needs to collect their thoughts.

In some cases, it’s necessary to include some of those non-verbal cues to ensure the intended meaning of a speech or class is the same on the page as it was live. Either way, transcription can ensure that, whatever the message, it is recorded accurately.

Not Lost, but Found in Translation (and Transcription)

And yes, transcription is also very much about translation.

There is a funny scene in the 2003 movie Lost in Translation in which Bill Murray’s character is shooting a commercial in Japan and the highly animated director – speaking Japanese – is more than a bit verbose with his direction.

While the exchange is played up to full comedic effect, it highlights the usefulness that transcription would produce.

Certainly, a more forthcoming translator would have been helpful, but so too would a clear transcription, one where Bill Murray’s character could ignore the flourishes of the director (and the misinterpretations of the translator) and instead focus on the actual instructions given.

Transcription takes the translation of a sophisticated foreign language, cuts through its unfamiliarity and provides the simplest form of what someone may be telling you.

Some languages may require 20 words to say what might take us only five to express, or vice versa. Ultimately, it gives you the ability to communicate efficiently regardless of what’s communicated.

Accessing the Once Inaccessible

The final purpose that transcription serves is arguably the most critical – accessibility.

Communication is of vital importance to an open society. Unfortunately, some people are limited in their ability to hear or have cognitive disabilities that require information to be written for them to absorb it.

Even for those who are visually impaired, Braille is a form of transcription – taking previously inaccessible text and placing it literally at their fingertips.

Businesses that make audio or visual content available in a text format open themselves to a much broader base of customers. Doing the same for in-house materials also ensures a company avoids violating workplace discrimination standards.

Educationally, transcription (and more specifically, old-school note taking) offers a number of avenues to help students learn.

As we already noted, those with cognitive disabilities can see benefits from transcription. So too can those with less severe conditions such as Attention Deficit Disorder, Dyslexia or other learning impairments.

But transcribing a lecture or classroom discussion will also ensure that any student, regardless of ability or intelligence, benefits.

Oddly enough, because this type of transcription involves a pupil putting the content into their own words, it proves a more effective manner for them to learn and absorb the material. Particularly if they put away the laptop and pick up a pencil.

Cutting Through The Clutter

Cleaning up the verbal debris that comes with any language and providing access to that language are not the only reasons transcription is essential.

It also produces a clear record of interactions that we might need to reference later on.

Consider times when you’ve used the chat help function for customer support. Often, once the interaction is complete, you receive an offer for a transcript of the chat. That is helpful if you need to refer back to it in the future.

Imagine how valuable that transcript is after a customer service phone call – or any call you conduct with another company. As much as we rely on chats, text, and email, or knowledge centers to solve issues, the telephone remains the preferred contact method for many.

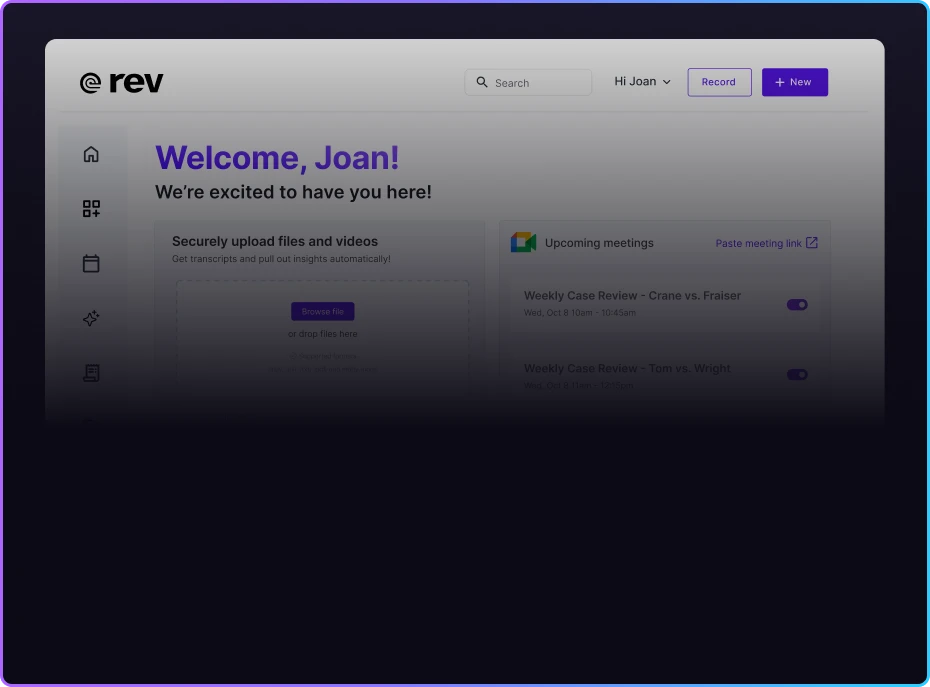

Beyond just having a record, the transcript you receive is also easier to search.

If you’ve ever attempted to search through an audio or video file for a specific piece of data, then you understand how cumbersome that exercise can be. Even when you find it, you still have to extract it, and yes, transcribe it.

And though technology is as ubiquitous and reliable as ever, possessing an “offline copy” of a video presentation, webinar or similar video-based demonstration can save you in times when the tech doesn’t work, or your audience wants a hard copy.

But really, aside from a few cursory instances, what companies would use such a low-tech method of documentation?

As it turns out, plenty.

Consider the need for medical procedures, prescriptions or doctor’s instructions to be documented for the patient, their medical file, and an insurance carrier. An improper collection of notes could endanger the wellbeing of a patient or negatively impact their medical costs.

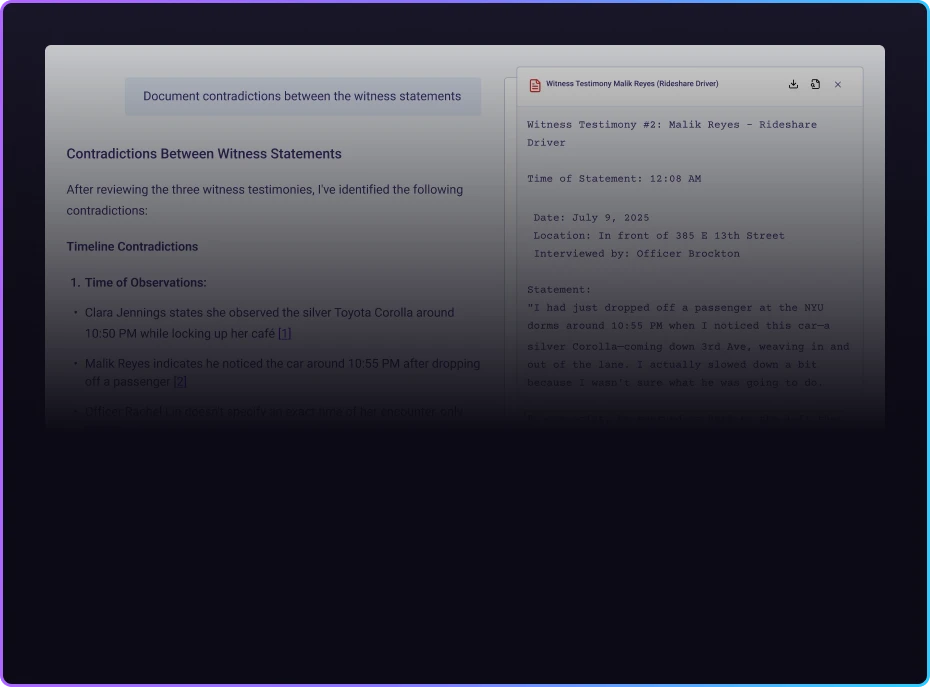

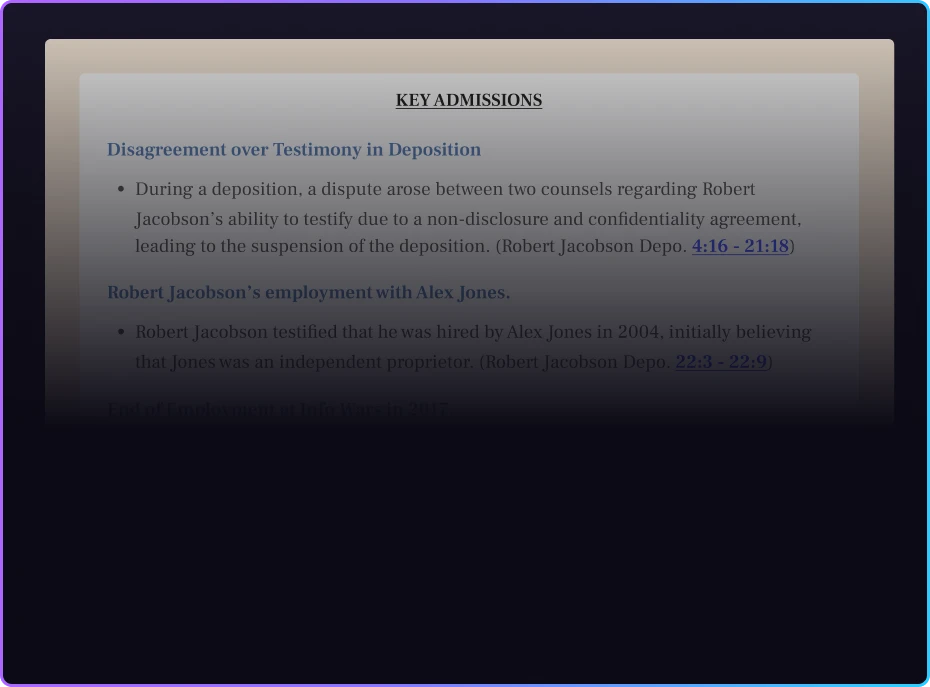

The other industry that’s heavily reliant on transcription is the legal field.

Given the intricacies of legalese, the repercussions of getting it wrong could be huge. Transcription ensures that regardless of proceedings, everyone remains on the same page.

Other industry examples include market studies or scientific research, as well as the previously mentioned areas of education, business presentations and training, and customer service.

A New Frontier of Transcription

On the surface, transcription may seem reasonably straightforward, and by all accounts it is. However, new advances are pushing the envelope and forcing us to reconsider transcription as something well beyond a simple speech-to-text concept.

One such example turns the whole idea of transcription on its head, by an actual turn of the head. Indeed a very different frontier.

A team of researchers at the renowned Massachusetts Institute of Technology (MIT) created a device capable of transcribing internalizations – basically things you say internally but don’t say out loud – and turning them into words.

The system, called AlterEgo, uses electrodes to “read” neuromuscular signals in your face and jaw, transmitting them to an AI instructed to match specific signals with words. AlterEgo also makes use of bone-conduction headphones designed to push information to the user’s inner ear.

Arnav Kapur, a graduate student helping to lead the development group, notes the catalyst for the project “was to build an IA device — an intelligence-augmentation device. Our idea was: Could we have a computing platform that’s more internal, that melds human and machine in some ways and that feels like an internal extension of our own cognition?”

While the tech is undoubtedly next-level and mirrors devices so far only seen in sci-fi, the potential applications are deeply rooted in modern-day practicality.

Georgia Tech College of Computing professor Thad Starner highlights several scenarios where the AlterEgo system makes sense.

He first points to the ground crews that direct planes and handle baggage at airports. “You’ve got jet noise all around you, you’re wearing these big ear-protection things — wouldn’t it be great to communicate with voice in an environment where you normally wouldn’t be able to?”

Starner says that “the flight deck of an aircraft carrier, or even places with a lot of machinery, like a power plant or a printing press,” provide other viable locations for the tech.

He also adds that even someone who’s lost the ability to speak could still, theoretically, maintain some measure of their voice.

Right now, the system remains a work in progress, with the research group collecting data and working to improve the AI’s knowledge base.

When it comes to transcription, that can only mean one thing – the ability to transcribe more words.