Educational Technology Trends Shaping Higher Education

Edtech is no longer just a competitive advantage. For many institutions, it's a legal necessity. Learn more about the biggest trends shaping the industry.

16 Fourth Of July Speeches You Need To Know

Looking for patriotic inspiration? Read transcripts of the 16 best Fourth of July speeches to see how past presidents and leaders celebrated Independence Day.

20 Commencement Speech Examples That Inspired Generations

From Steve Jobs to Sheryl Sandberg, here are 20 of the best commencement speeches of all time. Plus, get tips on how to write a graduation speech of your own.

Video Accessibility Checklist For Schools + Universities

Learn how to make your educational video content accessible to all students and download our free video accessibility checklist to ensure ADA compliance.

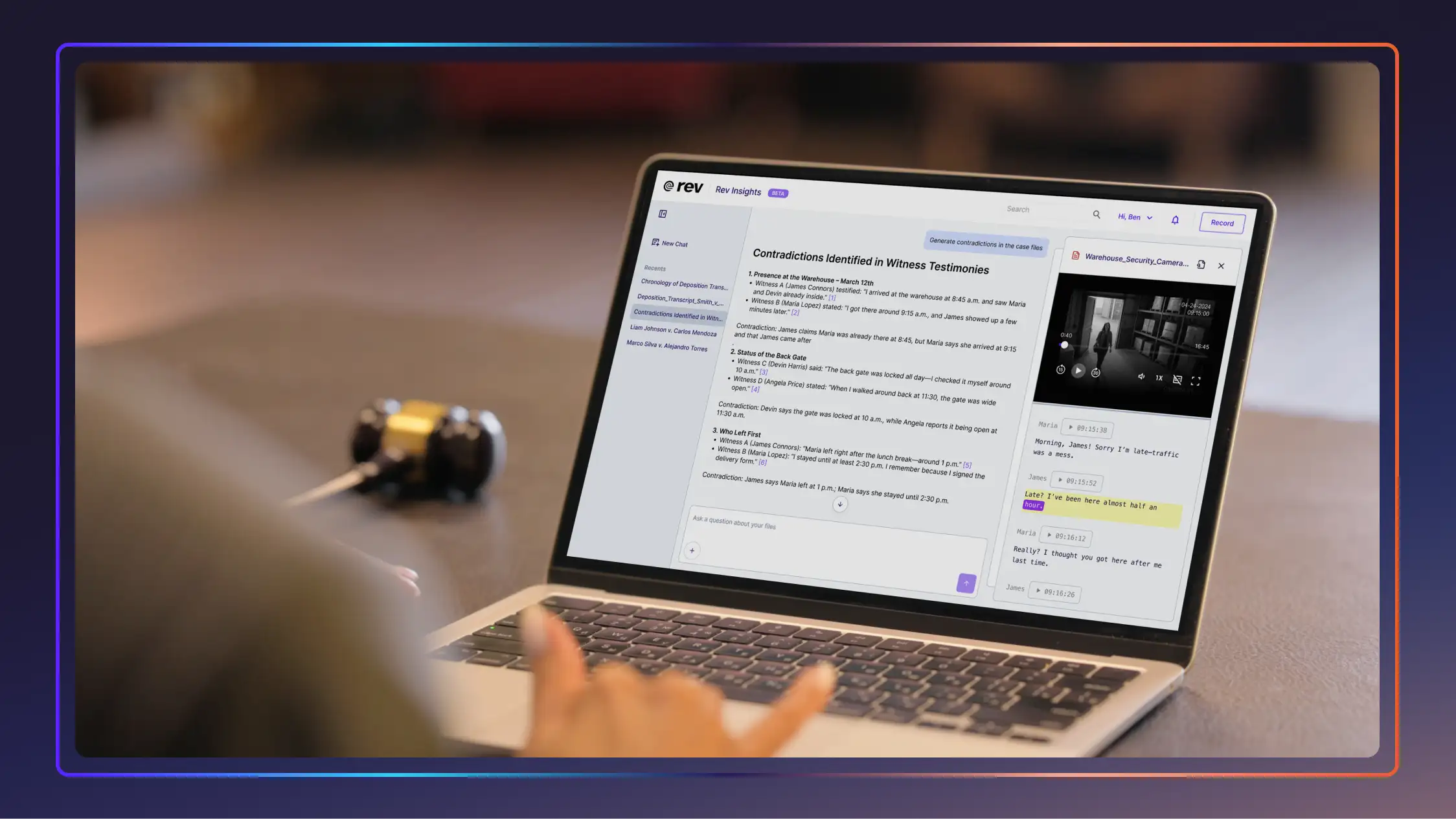

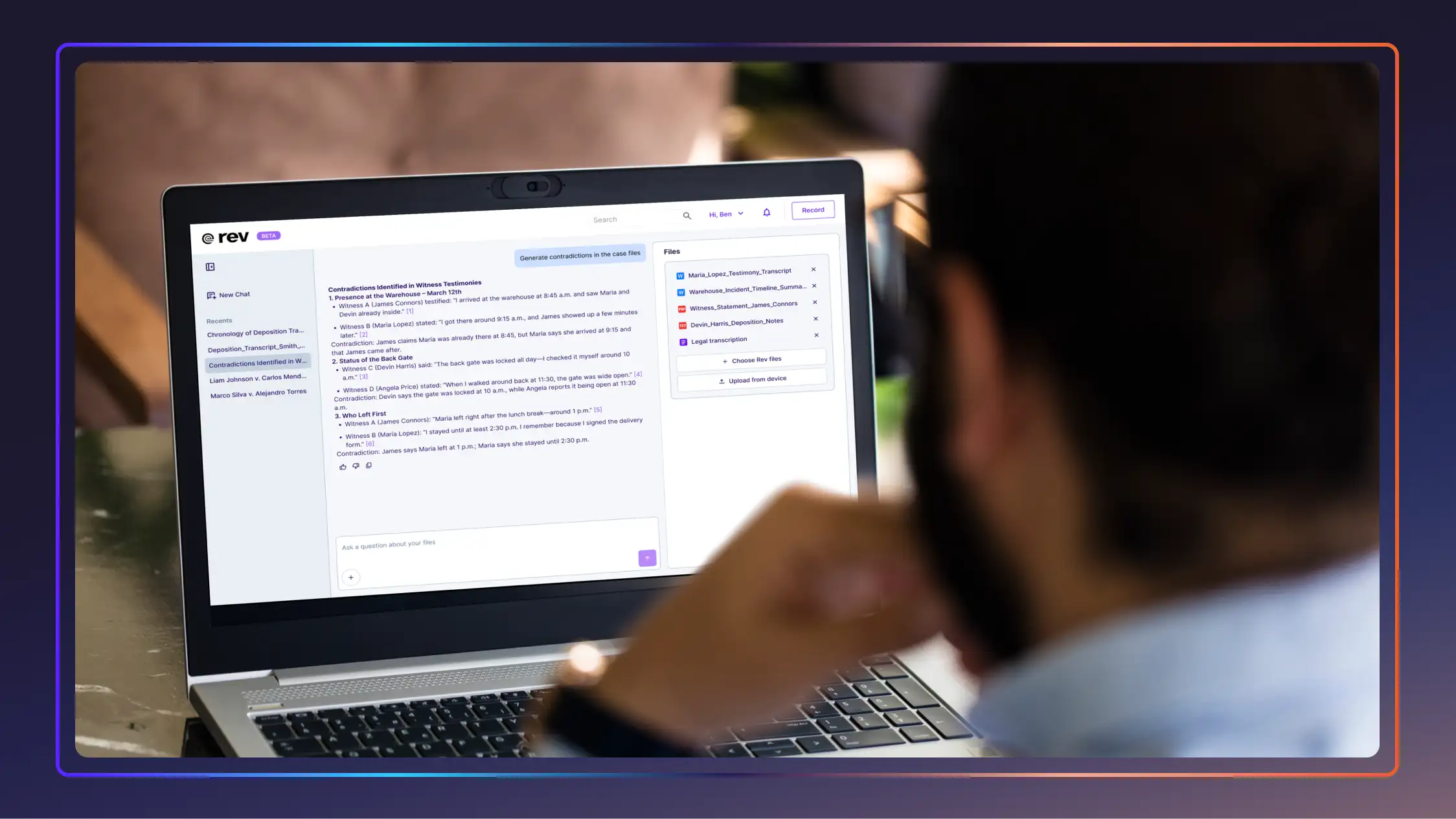

10 Legal AI Tools That Actually Help Lawyers

From evidence and contract analysis to research and drafting tools, these 10 legal AI tools will help you work faster without sacrificing accuracy.

Your Guide To Accessibility in Higher Education

With new accessibility deadlines looming, here’s Rev’s guide to getting your school, lectures, and learning materials up to ADA and WCAG guidelines.

ADA Website Compliance: What Site Owners Need to Know

Learn about upcoming ADA website compliance deadlines for schools, public agencies, and site owners. Plus, download our checklist to ensure your site is accessible.

The Real Impact of AI for Personal Injury Lawyers in 2026

From medical transcription to evidence analysis, AI for personal injury lawyers can streamline your workflow and raise your bottom line. Here’s how!

54 Personal Injury Statistics: Settlements, Injury Data, & More

Check out data on accident rates, settlement averages, personal injury technology, and more with over 50 unique stats on the personal injury industry.

Anatomy of a Winning Demand Letter (+ Free Demand Letter Template)

Write stronger demand letters with clear steps, examples, and a free template. Learn how attorneys build persuasive settlement demands.

Survey: 48% Say AI In Legal Education Delivers An Advantage

New survey of legal pros shows AI's rise in legal education. Nearly half say it gives students a career edge, while accuracy and ethics outrank speed and cost.

7 Deaf TikTok Creators You Can’t Miss

Learn about deaf challenges, contributions, and culture from a vibrant community of creators on TikTok. Here is a list of 7 creators to check out.

Rush Legal Transcription Services: Fast, Accurate, & Court-Ready

Need transcripts fast? Compare the best rush legal transcription services for same-day, overnight, and court-ready delivery. See pricing and turnaround times.

Solo Law Firm Software to Ease Your Workload

Find the best law practice management software for solo attorneys. Streamline billing, trust accounting, and case prep with modern tech.

TrialKit vs. Rev (And Other Alternatives)

TrialKit is a useful tool for the discovery phase. How does it stack up against Rev and other alternatives for streamlining discovery? We break it down.

49 Criminal Justice Statistics for the United States

The American criminal justice system is vast and ever-changing, with technology and reforms being introduced every day. Let’s look at some key criminal justice data.

54 Lawyer Statistics + Facts That Might Surprise You

Lawyer statistics ranging from demographics to legal technology to future trends are collected in this comprehensive look at 54 legal facts by Rev.

Beat Lawyer Anxiety: How To Stop Worrying About Court

Attorney anxiety is a natural response to high-stakes legal work. Hear how real-life lawyers reduce their stress before court and boost their confidence.

AI Transcription Services With Free Trials: Find Your Match

Learn which AI transcription services you can try for free, so you can find the right service for your needs! Click to read the guide from Rev.

Overcoming Common Evidentiary Issues In Criminal Defense

Explore evidentiary problems, from hearsay to missing foundation, and how criminal defense teams challenge the prosecution under the Federal Rules of Evidence.

Insurance Transcription: 5 Services for Claims + Compliance

Take a look at the top insurance transcription services that understand insurance workflows and can handle even the most sensitive investigation recordings.

Top Legal AI Software Worth The Investment

Raise your firm’s productivity and maximize billable hours with these top 9 legal AI software companies that are worth the investment.

Reasonable Doubt: Definition, Burden, And Strategies

Understand what reasonable doubt means, the prosecution’s burden of proof, and how defense attorneys identify doubt in complex criminal trials.

Upgrade Police Evidence Management With Technology

Inefficient police evidence management isn't just a workflow issue—it's a barrier to justice. Luckily, technology is here to help. Click to learn more.

Cybersecurity For Law Firms: How To Avoid A Breach

Learn how to improve your law firm’s cybersecurity with key threats, compliance rules, and 10 practical strategies to protect sensitive client data.

Policy Limit Investigations + How To Maximize Recovery

Understand policy limit research in personal injury cases and learn how to identify coverage, strengthen strategy, and maximize recovery.

Best Harvey AI Alternatives for Attorneys (+Accuracy Comps)

The best legal AI tools help attorneys address specific needs, optimize firm workflows, and improve client cases. See how these Harvey AI competitors compare.

Legal Subscription Services Better Than ChatGPT Wrappers

Legal subscription services can’t just be ChatGPT in shiny wrappers; they need to truly understand and integrate with what attorneys need. Here’s our guide to the best.

The Police Shortage Crisis: Can AI Bridge The Blue Line?

Learn how AI tools are addressing the police officer shortage by helping with digital evidence management, law enforcement transcriptions, and more.

Cross-Examination: Questions That Control The Courtroom

In this guide, we explore the most effective cross-examination question types and share advanced techniques to help you command the courtroom with confidence.

Creating a Digital Trial Notebook: From Chaos to Confidence

Step-by-step guidance for building a digital trial binder that improves organization, speeds up prep, and supports a more efficient trial strategy.

2026 Legal Technology Trends That’ll Define The Next Decade

New legal technology will fundamentally change how legal work gets done and what it takes to stay competitive in the next decade. See the top trends for 2026+ here.

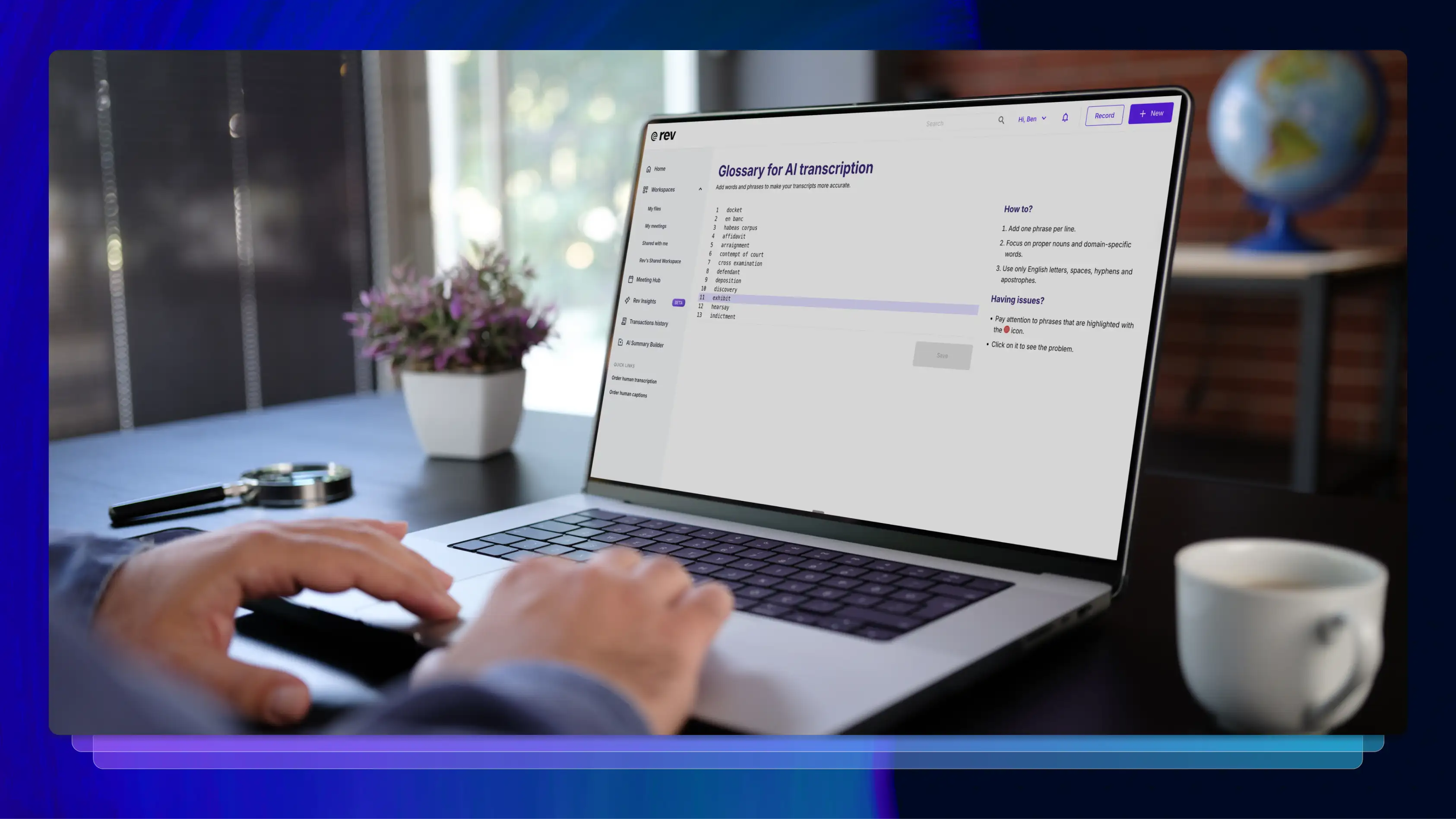

100+ Legal Terms + How A Transcript Glossary Improves Accuracy

Explore 100 essential legal terms and learn how Rev’s custom glossaries improve transcript accuracy, consistency, and efficiency for legal teams.

11+ Legal Tech Conferences to Attend in 2026

Legal tech conferences can introduce your firm to new, cutting-edge technology that will help you deliver better results at a faster pace. Let’s look at some important upcoming law events.

Why Legal AI Needs Better AI Architecture, Not Generative Guesswork

Click to learn how closed-loop AI architecture can significantly reduce hallucination risk vs. generative AI guesswork in high-stakes legal work.

Digital Forensics Tools to Help Beat Evidence Overwhelm

Rev’s breakdown of tools for gathering and organizing digital evidence covers a wide range of cyber forensics software and free digital forensics tools.

Time Management For Lawyers: How Trial Teams Can Work Faster

Better time management for lawyers can prevent burnout, improve efficiency, and increase firm profitability. Learn how to work faster with these tips and tools.

Law Firm Efficiency + Productivity Hacks

Small changes in how you work can create huge differences in your law firm's efficiency. Reclaim hours and deliver better outcomes with our guide.

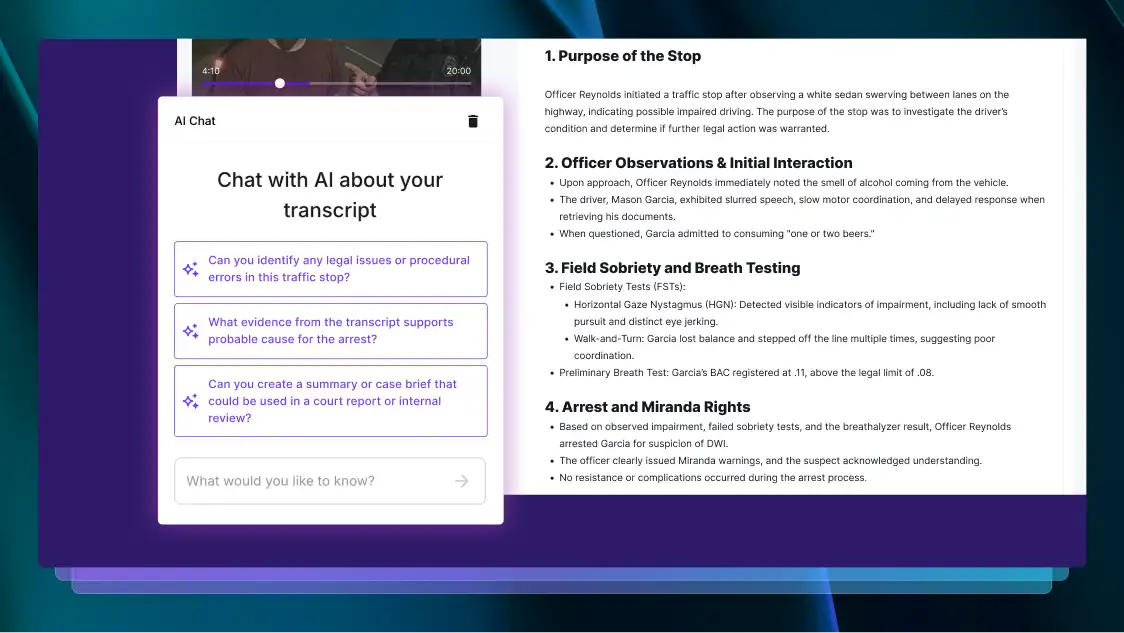

DUI Defense Strategies + Using AI to Investigate

There are a variety of DUI defenses available for defense attorneys. Explore the most effective defense strategies and learn how AI can help you work faster.

Private Investigator Software + Tools to Find Answers Fast

Rev investigates the best private investigator software options and detective tools available to help PIs find insights and solve cases faster.

Admitting a Deposition Transcript Into Evidence: What to Know

Learn the steps it takes to admit a deposition transcript into evidence, common reasons for dismissal, and how tech expedites the process from the experts at Rev.

Digital Evidence Management Tips to Beat Court Backlog

Digital evidence management systems are critical for collecting and managing key case evidence. Here’s how prosecuting teams can beat the court backlog with effective DEMS.

Survey: 65% Use AI Legal Advice, But Accuracy Concerns Remain

New data reveals how Americans use AI for legal questions, why they still rely on lawyers, and what law firms can do now to build safer AI-ready workflows.

Heavy AI Users Face 3x More Hallucinations and Spend 10x Longer to Get Answers

Survey insights show heavy AI users face 3x more hallucinations, take 10x longer for satisfaction, and struggle most with AI prompting despite their experience.

85% Believe Prompting Will Be a Must-Have Job Skill in the AI Era

A new survey reveals Gen Z treats AI prompting as career prep, while employer training boosts efficiency by 20%. See how generations approach this emerging job skill.

58+ Chatbot Statistics That Demonstrate The Future of AI

The popularity of AI chatbots for businesses and beyond doesn’t seem to be slowing down. Let’s look at some statistics that cover the popularity, use cases, and future of AI chatbots.

60% of Americans Get Their Legal Knowledge From Media Consumption

A new survey reveals what Americans think lawyers do, spotlighting the growing need for solutions that close the gap between legal reality and public perception.

4 in 5 Legal Professionals Are Burned Out: Can AI Be the Lifeline?

New report: 4 in 5 lawyers experience burnout. Learn how overwhelming workloads lead to stress and how AI is helping legal professionals find balance.

2025 Court Reporting Industry Trends: What Lawyers and CRAs Need to Know

Understand the stenographer shortage, the rise of digital solutions, and key insights from the AAERT report. Plus, learn how Rev’s tech can help.

The 2025 Legal Tech Survey

Discover key insights from Rev's comprehensive 2025 Legal AI Survey revealing how the profession is embracing AI.

Rev + ASR: An In-Depth Look

Explore key insights from Rev's State of ASR Report, highlighting accuracy, benchmark results, and trends in speech technology.

40 Meeting Statistics That Showcase New Trends in Meetings

Let’s look at some must-know meeting statistics to learn more about the advantages of meetings and when they’re unnecessary.

36 YouTube Stats, Facts, and Figures to Know in 2026

YouTube is one of the most influential social media platforms around. We’ve compiled some must-know YouTube stats to inform your strategy.

46 Video Marketing Statistics: What Is the State of the Industry In 2026?

How effective is video marketing? Discover key video marketing statistics—from engagement rates to ROI—to inform your 2026 strategy.

Rev Improves Accuracy by Over 30% with Launch of New v2 ASR Model

Rev's new automatic speech recognition (ASR) model is 25% more accurate than our existing model. Learn more about this technology today.

The Ultimate Roundup of Compelling Closed Captions Statistics

Whether you're looking to improve accessibility, boost SEO, or promote engagement, these statistics prove the benefits of closed captions.

Open vs. Closed Captions: What's the Difference? Why Does It Matter?

Understand open captions vs. closed captions, how each supports accessibility, and which option works best for your video content.

How to Record Audio on an iPhone in Three Different Ways

Learn how to record on iPhone using Voice Memos and other simple methods to capture audio, interviews, and conversations with better clarity.

Transcript to Trial: Leveraging Deposition Technology

Discover how firms are saving 12 hours per week per attorney by switching to deposition technology with this whitepaper from Rev. Click to download.

Building a Speech Recognition System vs. Buying or Using an API

Pros and cons of building your own speech recognition engine or system vs. using a pre-built service or API that converts speech to text.

Guide to Speech Recognition in Python with Speech-To-Text API

Learn how to add speech recognition to applications, software, and more using Python and our Speech-to-Text API.

Client Communication Mastery: Explaining AI To Traditional Attorneys

Learn how to strategically communicate the value of legal AI tools to traditional attorneys and clients with this expert-led webinar from Rev.

Get Started With Rev

Check out our guides to learn how Rev’s platform works. Whether you learn best by watching, reading, or following along step by step, we’ve got you covered.

Legal Bottlenecks & The Future of Justice

Evidence backlogs are threatening case outcomes, but luckily, AI can help bridge the gap. Our whitepaper explores how AI can revolutionize legal practice.

Unlock Case-Winning Insights

In this webinar from Rev, learn how to implement AI-powered depo summary technology in your workflow and how it can benefit your legal strategy.

Top Transcription Companies for 2026 Needs

Looking for a transcription service to use in 2026? Learn more about the features, benefits, and costs of the top transcription companies.

Bridging the Justice Gap: How Digital Court Reporting Expands Access to Legal Proceedings

Discover how the steno shortage is impacting the justice system and how digital court reporting can bridge the gap with this white paper from Rev.

What is an SRT File?

Wondering what an SRT file is and how to create one? Rev’s SRT file guide explains everything, including how to add captions and subtitles to your video.

Implementing Digital Court Reporting to Scale Your Legal Services

Learn how to implement high-quality digital court reporting solutions to meet client demands with this webinar from Rev. Click for more.

Maintaining Attorney-Client Confidentiality with Modern Speech Tech

Discover how to leverage AI-powered speech technology in your legal practice while maintaining attorney-client privilege.

Free Transcription Tools That Make Your Life Easier

Explore Rev’s free transcription tools and see how to convert audio or video into text online—accurate, fast, and easy to use.

Protecting The Record In The Technology Age

Is your legal data truly secure in the digital age? Rev explores strategies for protecting court reporter data with an expert panel in this webinar.

Building Stronger Cases Through AI Speech Technology

Download our whitepaper to see how you can build stronger arguments, save time, shore up security, and much more with legal AI technology.

Legal Leadership Summit in Austin

Join Rev in Austin, Texas, for the 2025 Legal Leadership Summit, and gain access to tailored sessions featuring top industry experts.

Shape The Future Of Legal Tech

Join an elite group of innovators shaping the future of legal tech through quarterly meetings, product feedback, and exclusive benefits. Apply today.

The Modern Research Challenge: How AI Can Transform Workflows

Discover how AI and speech technology are transforming market research. Learn to capture, analyze, and leverage conversations to deliver deeper insights faster.

The Digital Court Reporting Revolution: Five Critical Trends Reshaping CRAs in 2025

Discover five critical trends transforming court reporting agencies in 2025, from AI-powered speech recognition to enhanced security standards in digital documentation.

Secure Speech Tech for Law: AI Without the Risk

Learn how to integrate AI into your law firm, how to ramp up your data security, and much more with this whitepaper from the experts at Rev.

Legal Advocacy Webinar

Join Rev for a webinar exploring the evolving landscape of court reporting laws and digital transformation, and how law firms can stay ahead of the game.

7 Best Free Speech-to-Text Apps for iOS

Rev’s guide to the 7 best free speech-to-text transcription apps for iOS covers everything from the new Rev platform to Apple Voice Control and Dictanote.

White House Cabinet Meeting 5/27/26

Donald Trump and his White House cabinet hold a meeting on 5/27/26. Read the transcript here.

Anti-Fraud Roundtable

J.D. Vance hosts an anti-fraud roundtable with the FTC chair and state Attorneys General. Read the transcript here.

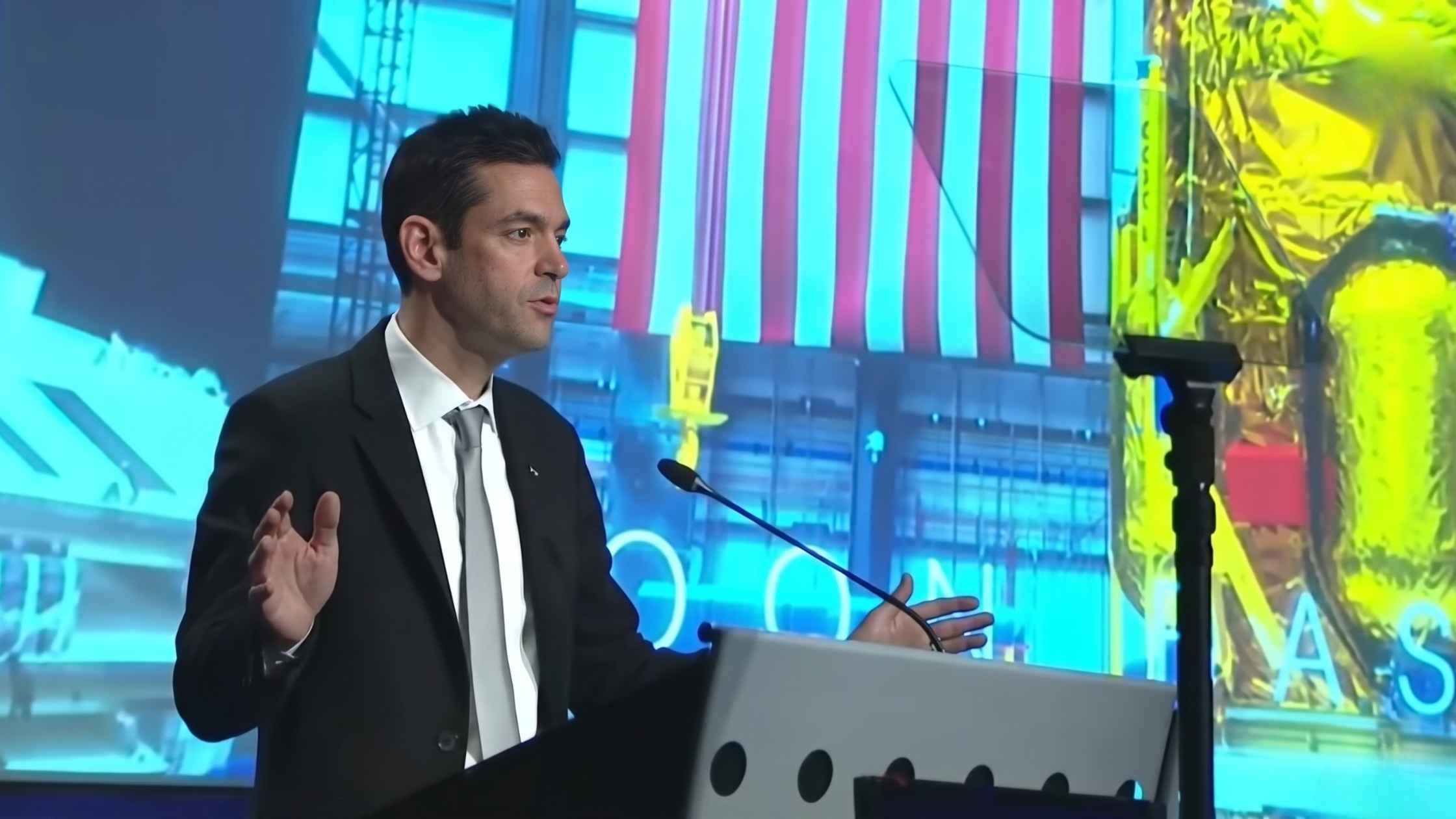

Moon Base Announcement

NASA shares plans to construct a permanent base on the Moon. Read the transcript here.

Ken Paxton and John Cornyn Post Runoff Speeches

Ken Paxton and John Cornyn speak after the Texas Senate primary runoff. Read the transcript here.

Pope Leo XIV on AI

Pope Leo XIV and others speak on the care of human dignity in the era of artificial intelligence. Read the transcript here.

Memorial Day at Arlington

Memorial Day observance at Arlington National Cemetery. Read the transcript here.

Marco Rubio Visits India

Secretary of State Marco Rubio and India’s External Affairs Minister Subrahmanyam Jaishankar hold a press conference in New Delhi. Read the transcript here.

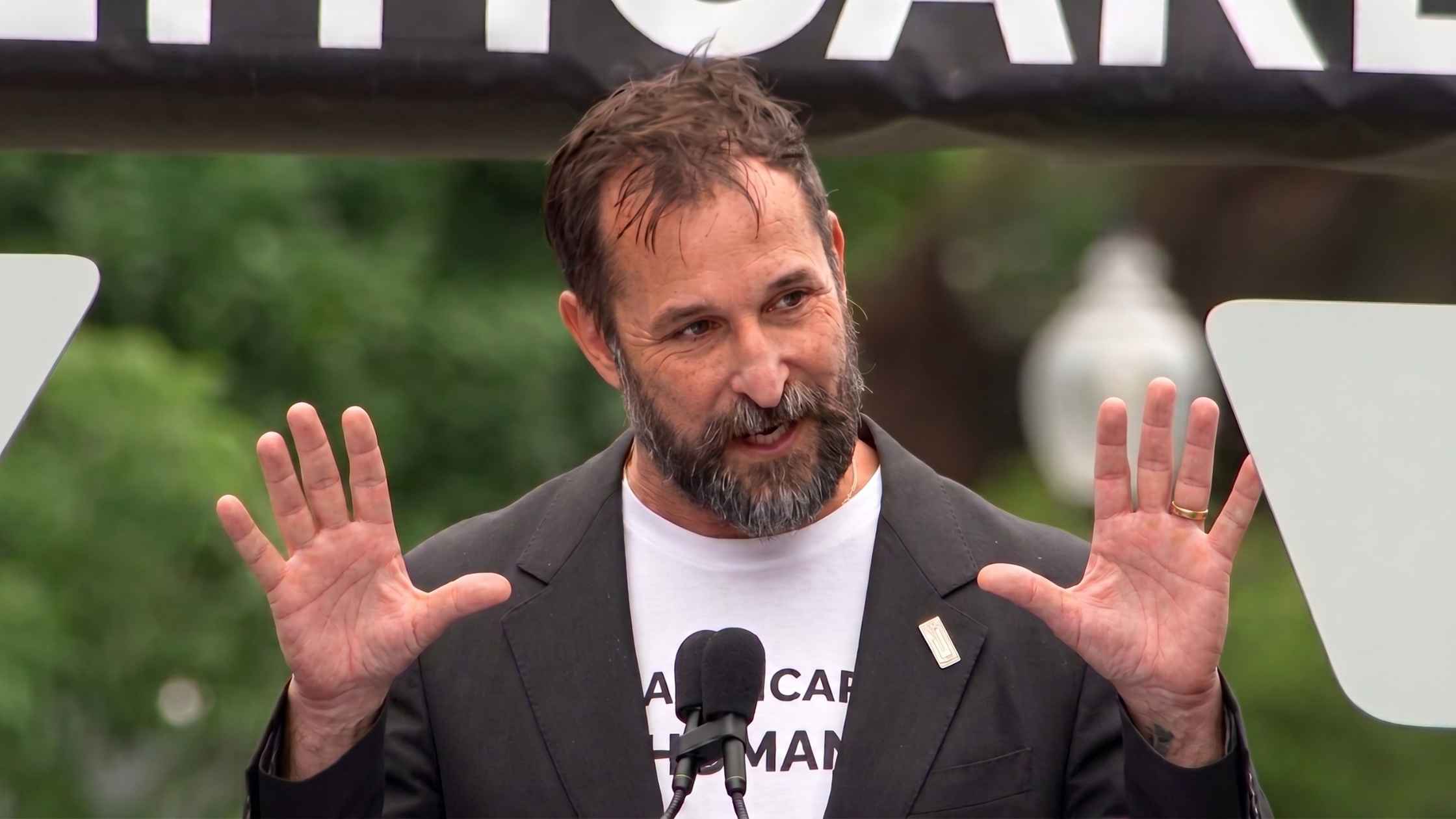

“Healthcare Is Human” Rally on Capitol Hill

Actor Noah Wyle speaks at the “Healthcare Is Human” rally on Capitol Hill. Read the transcript here.

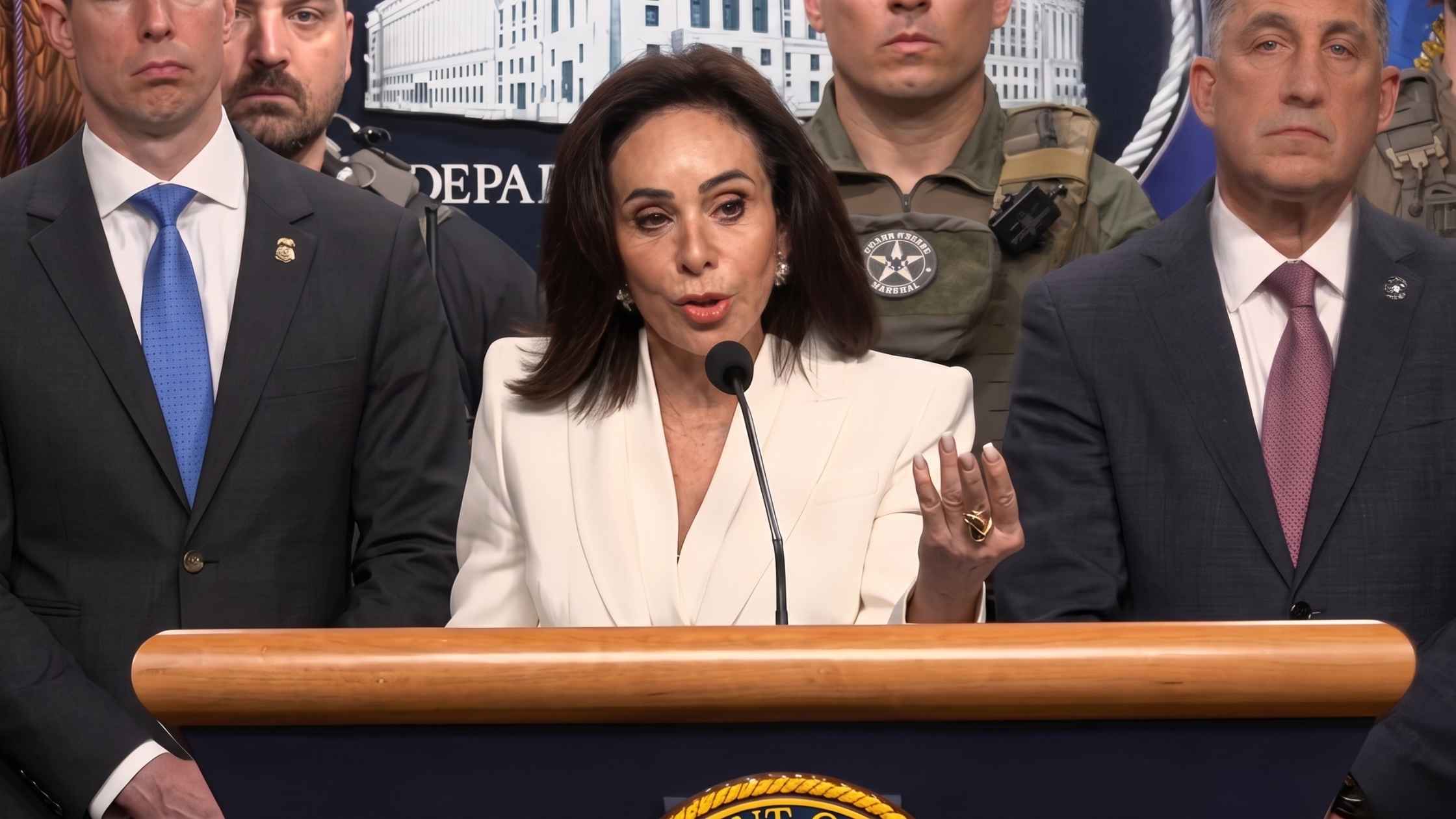

Anti-Fraud Efforts in Minnesota Announced

DOJ, RFK Jr., and Dr. Oz unveil record Minnesota Medicaid fraud crackdown. Read the transcript here.

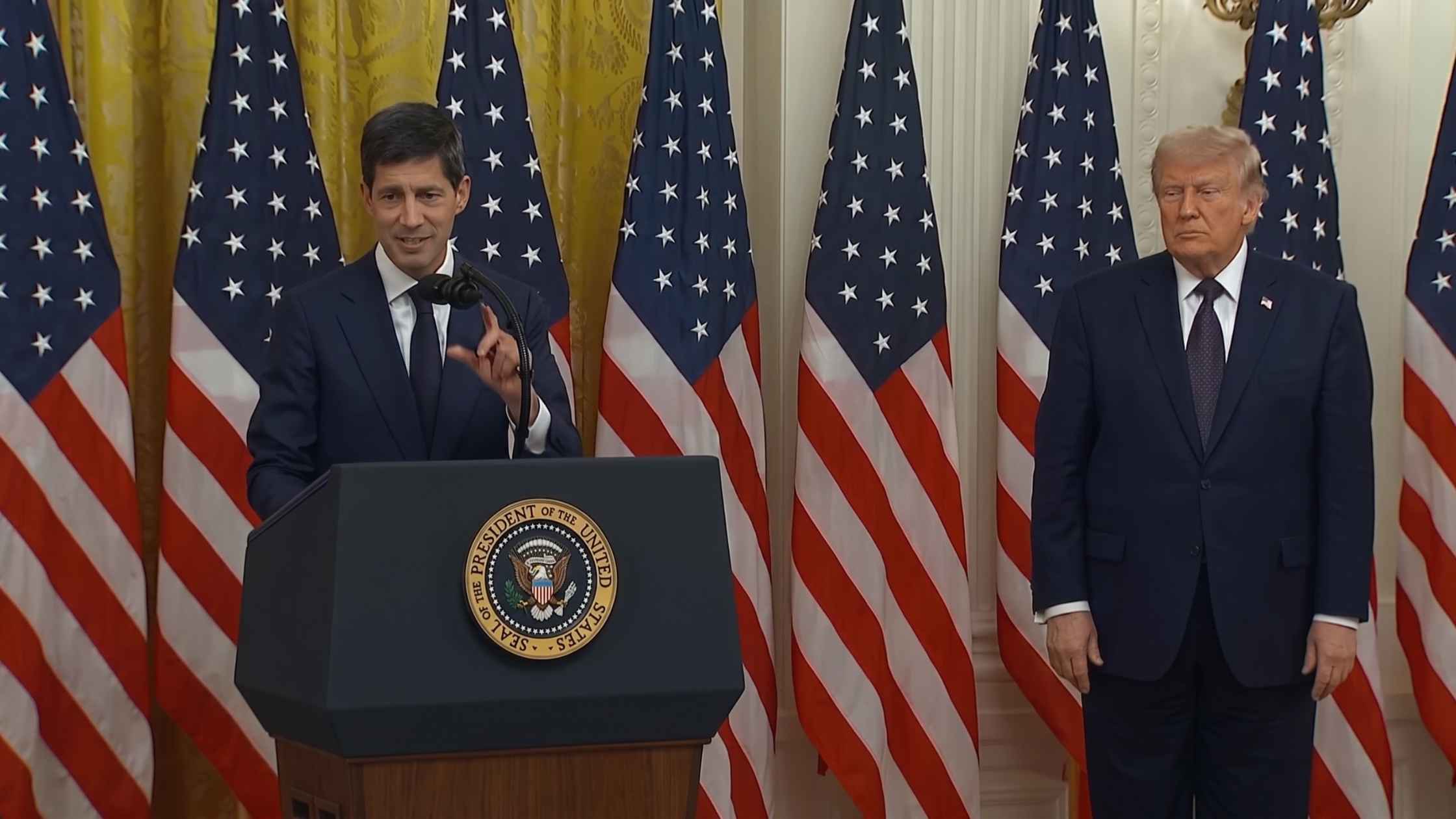

Fed Chair Kevin Warsh's Swearing-In Ceremony

Kevin Warsh is sworn in as the new Fed Chair. Read the transcript here.

EPA Announcement on Refrigerant Regulations

EPA loosens regulations on refrigerant chemicals to drive down grocery costs. Read the transcript here.

Southern Poverty Law Center Hearing

House Judiciary Committee hearing on allegations against Southern Poverty Law Center. Read the transcript here.

EU Economic Forecasts

EU economy commissioner Valdis Dombrovskis presents the economic forecasts for 27 EU countries. Read the transcript here.

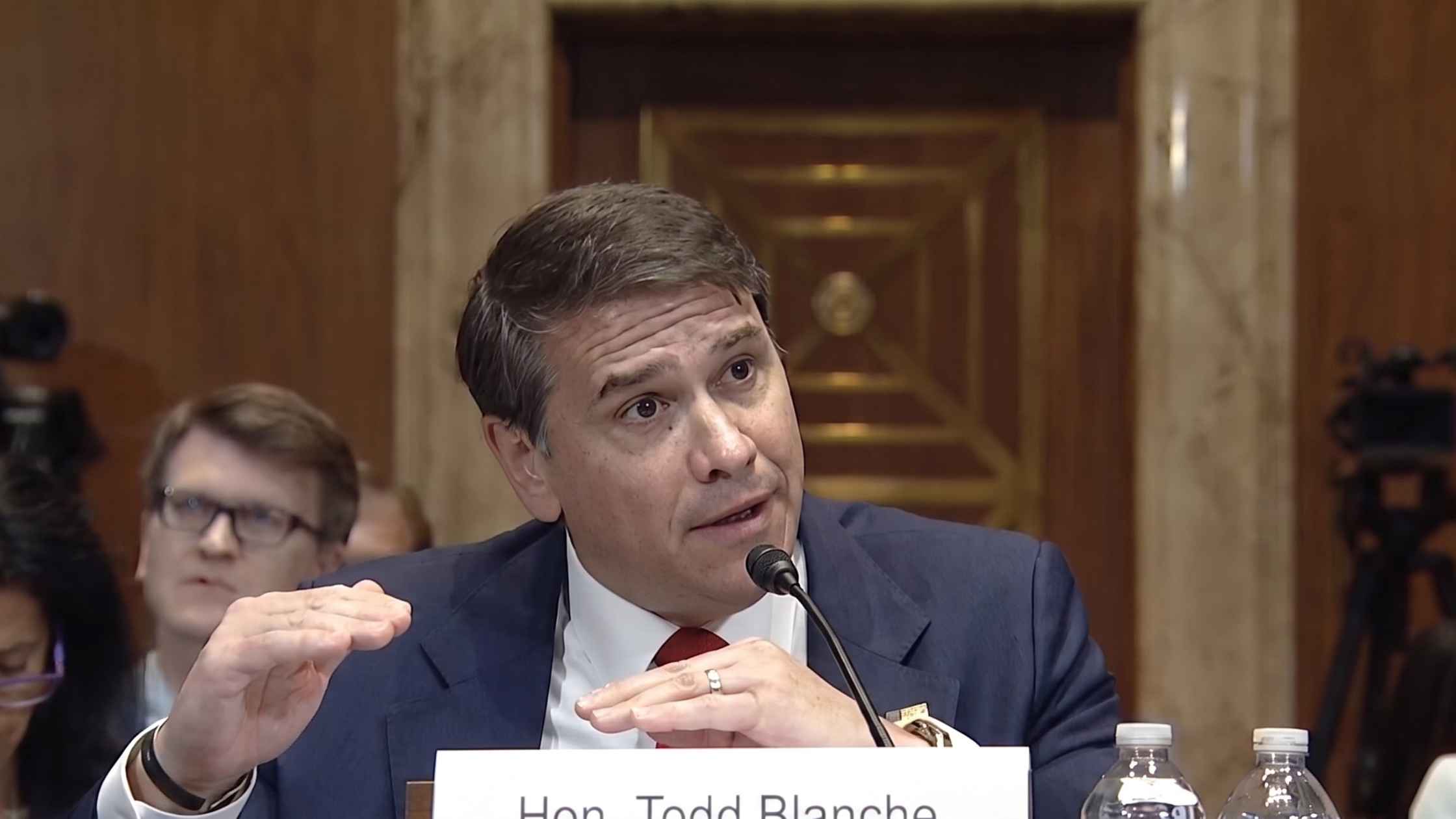

Indictment Of Cuba's Raúl Castro

Acting AG Todd Blanche holds a press briefing to announce the indictment of Raúl Castro in Miami, Florida. Read the transcript here.

U.S. Coast Guard Academy Commencement Address

Donald Trump delivers the U.S. Coast Guard Academy commencement address. Read the transcript here.

CAP Ideas Conference

Democratic leaders speak at the Center for American Progress Ideas Conference. Read the transcript here.

NATO Military Chiefs Hold Press Conference

NATO military chiefs hold a press conference following a meeting in Brussels. Read the transcript here.

DOJ Appropriations Hearing

Acting Attorney General Todd Blanche testifies before the Senate Appropriations Committee. Read the transcript here.

J.D. Vance White House Press Briefing on 5/19/26

J.D. Vance holds the White House Press Briefing for 5/19/26. Read the transcript here.

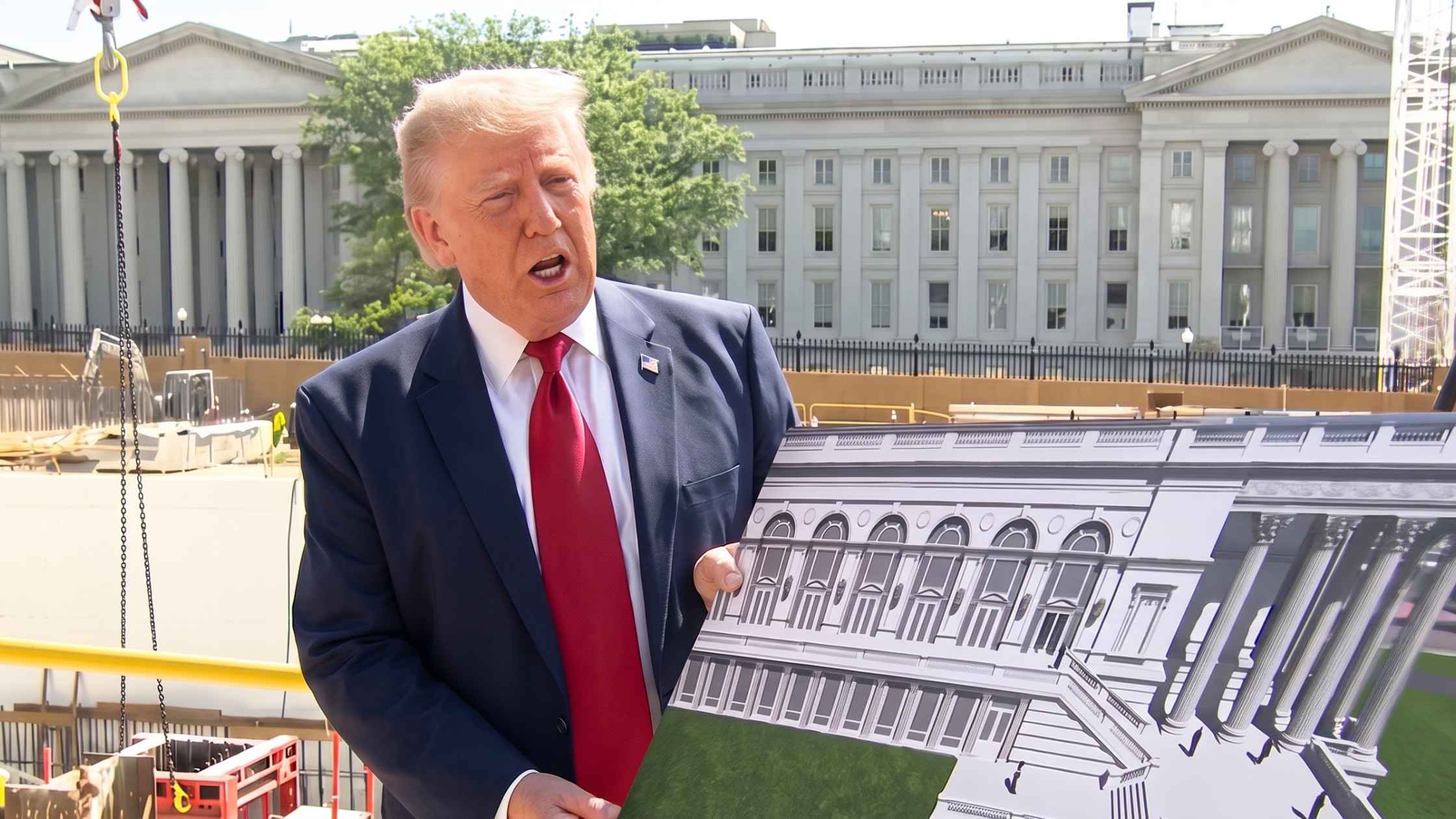

White House Ballroom Site Tour

Donald Trump visits the White House ballroom construction and takes questions from reporters. Read the transcript here.

PFAS Regulation Policy Roundtable

HHS Secretary RFK Jr. and EPA Administrator Lee Zeldin host PFAS regulation policy roundtable. Read the transcript here.

World Health Assembly in Geneva

WHO Director-General Tedros Adhanom Ghebreyesus speaks at the annual World Health Assembly in Geneva. Read the transcript here.

Islamic Center Shooting Press Conference

San Diego police, the mayor, the FBI, and the director of the Islamic Center of San Diego speak out after a deadly shooting. Read the transcript here.

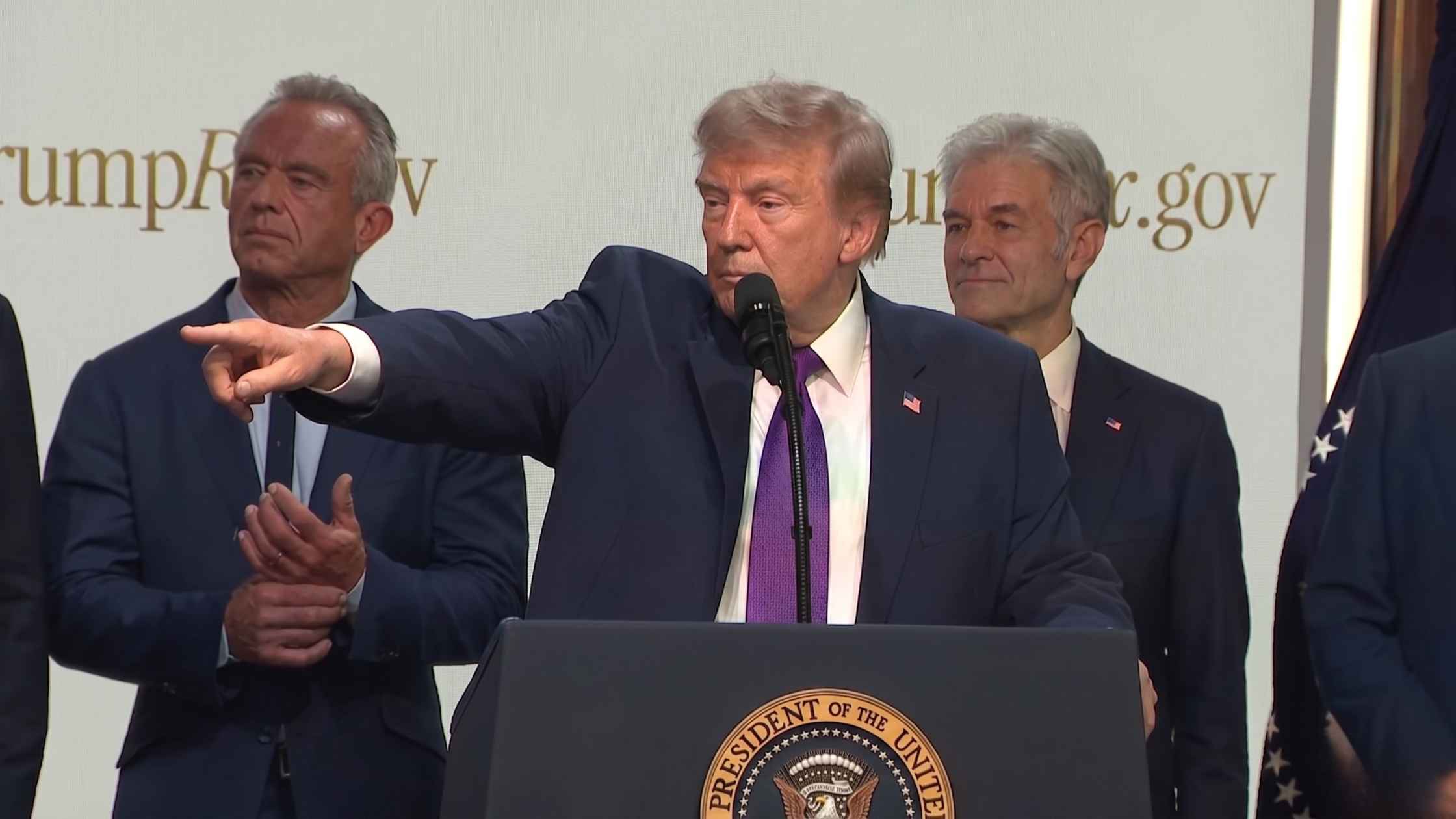

Healthcare Affordability Event

Donald Trump announces major expansions to the TrumpRx.gov program. Read the transcript here.

Sigourney Weaver Honored

Sigourney Weaver is immortalized with a handprint ceremony at Hollywood's TCL Chinese Theatre. Read the transcript here.

Military Innovation Hearing

Pentagon technology leaders testify on military innovation in House hearing. Read the transcript here.

Security Briefing for 250th Anniversary Celebrations

Federal safety officials unveil safety plans for major America 250 events this upcoming summer. Read the transcript here.

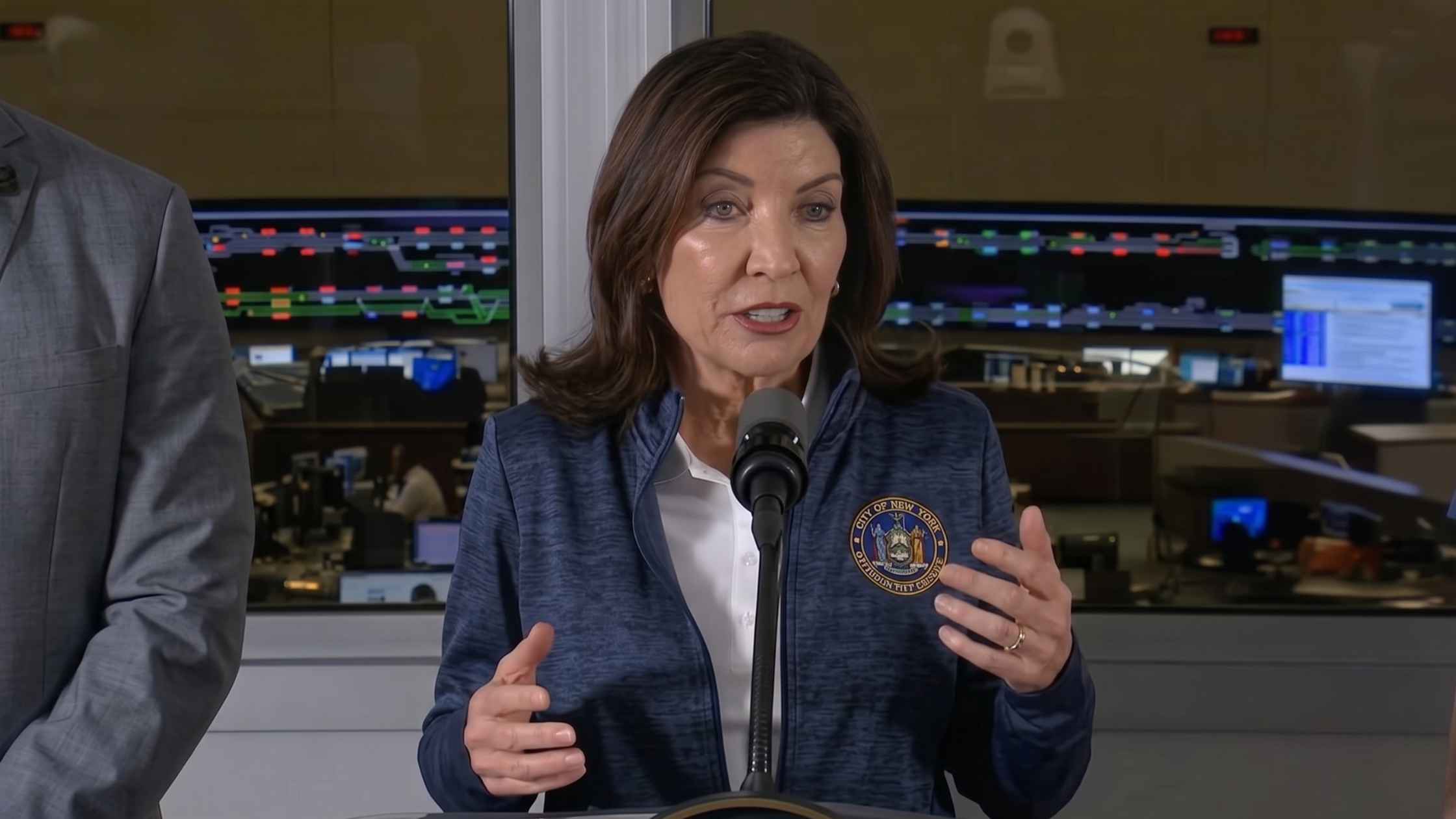

Long Island Rail Road Strike

Governor Kathy Hochul holds a press conference on the Long Island Rail Road strike. Read the transcript here.

Police Week Memorial Ceremony

Immigration and Customs Enforcement holds a memorial ceremony for fallen officers. Read the transcript here.

Anti-Fraud Press Conference

J.D. Vance holds a press conference on Anti-Fraud initiatives. Read the transcript here.

COVID-19 Whistleblower Hearing

A COVID-19 whistleblower testifies before the Senate Homeland Security Committee. Read the transcript here.

Army Budget Hearing

Army Secretary Driscoll testifies on budget request in Senate hearing. Read the transcript here.

Artemis II Astronauts on Capitol Hill

The Senate Commerce, Science, & Transportation Committee hosts members of the NASA Artemis II crew at the Capitol. Read the transcript here.

Department of Defense Budget Hearing

Pete Hegseth and Dan Caine testify on the DOD budget request before Congress. Read the transcript here.

Epstein Survivors Testify Publicly Before House Committee

The House Committee on Oversight and Government Reform hears from Epstein survivors. Read the transcript here.

Ball State University Commencement

Actor Hugh Jackman delivers the 2026 commencement address for Ball State University. Read the transcript here.
